Test automation has limits. You write test cases to cover specific scenarios. New features require new tests. Edge cases slip through. AI changes this. Intelligent systems learn from existing tests, generate new ones automatically, and adapt testing strategies based on what matters most.

The Evolution of Testing

Manual Testing (Era 1): Humans test everything. Comprehensive but slow. Doesn't scale.

Automated Testing (Era 2): Humans write tests once, automation runs them repeatedly. Fast but limited to scenarios humans anticipate.

Intelligent Testing (Era 3): AI learns from existing tests, generates new ones, prioritizes what to test, and adapts based on results.

AI-Powered Test Generation

How It Works

Traditional: "Test that login form validates email fields." You write 1 test case.

AI-powered: "Here are 10 login form test cases I generated by analyzing your form structure, testing common edge cases, and learning from similar apps."

AI examines your application:

- What form fields exist and their constraints

- How the application behaves with valid and invalid input

- Common patterns in your codebase

- How similar applications behave

It generates test cases that cover scenarios humans might miss:

- Email with international characters

- Password with unicode symbols

- Form submission while network is slow

- Multiple simultaneous submissions
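As a rough illustration of the idea, the sketch below derives edge-case inputs from a field's constraints. It is a rule-based stand-in for what a learned generator would produce, not a real AI system. The `Field` class, the `generate_cases` function, and the sample values are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Field:
    """Hypothetical description of one form field and its constraints."""
    name: str
    kind: str        # "email" or "password" in this sketch
    max_length: int


def generate_cases(field: Field) -> list[tuple[str, str]]:
    """Return (label, input value) pairs derived from the field's constraints."""
    # Boundary cases that apply to any text field.
    cases = [
        ("empty", ""),
        ("at max length", "a" * field.max_length),
        ("over max length", "a" * (field.max_length + 1)),
    ]
    # Kind-specific cases, mirroring the scenarios listed above.
    if field.kind == "email":
        cases += [
            ("missing @", "user.example.com"),
            ("international characters", "jürgen@münchen.example"),
        ]
    elif field.kind == "password":
        cases += [
            ("unicode symbols", "pässwörd✓123"),
            ("whitespace only", "   "),
        ]
    return cases


email = Field(name="email", kind="email", max_length=254)
for label, value in generate_cases(email):
    print(f"{email.name}: {label} -> {value[:30]!r}")
```

Timing-related scenarios such as slow networks or simultaneous submissions need a test harness that controls the network and concurrency, so they are left out of this sketch.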

Continuous Test Generation

As your app changes, AI-generated tests evolve with it:

- You refactor the login form

- AI detects changes

- Existing tests are automatically updated, or new tests are generated

Maintenance burden drops significantly.
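One simple way to detect that a form changed is to fingerprint its schema and compare against the last known fingerprint. The sketch below assumes the form can be described as a plain dictionary of field constraints. The schema layout and the `fingerprint` and `changed_fields` helpers are assumptions made for illustration.

```python
import hashlib
import json


def fingerprint(form_schema: dict) -> str:
    """Hash a canonical JSON form of the schema so key order does not matter."""
    canonical = json.dumps(form_schema, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(old: dict, new: dict) -> list[str]:
    """Names of fields that were added, removed, or whose constraints changed."""
    names = sorted(set(old) | set(new))
    return [n for n in names if old.get(n) != new.get(n)]


# Hypothetical schemas before and after a refactor of the login form.
old_form = {"email": {"required": True, "max_length": 254},
            "password": {"required": True, "min_length": 8}}
new_form = {"email": {"required": True, "max_length": 254},
            "password": {"required": True, "min_length": 12},
            "remember_me": {"required": False}}

if fingerprint(old_form) != fingerprint(new_form):
    print("regenerate tests for:", changed_fields(old_form, new_form))
```

Only the fields reported by `changed_fields` would need regenerated tests. Tests for unchanged fields can stay as they are.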

Intelligent Test Prioritization

The Problem

You have 10,000 test cases. Your test suite takes 6 hours to run. You can't wait 6 hours for every check-in. You run a subset and hope nothing breaks.

AI Solution: Risk-Based Prioritization

AI learns:

- Which tests catch the most bugs historically

- Which tests are most likely to fail given code changes

- Which tests cover critical paths vs edge cases

Instead of running all 10,000, AI suggests: "Run these 500 tests. They cover 95% of critical paths and 80% of historical bugs in these code areas." Runtime drops from 6 hours to about 30 minutes.
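The three signals above can be combined into a single score per test. The sketch below uses a hand-weighted formula and a greedy selection within a time budget. A real system would learn the weights from history. The `CaseRecord` fields, the weights, and the function names are assumptions for this example.

```python
from dataclasses import dataclass


@dataclass
class CaseRecord:
    """Hypothetical per-test history used for scoring."""
    name: str
    runs: int                      # how many times the test has run
    failures: int                  # how many of those runs failed
    covered_files: frozenset[str]  # source files the test exercises
    duration_s: float
    critical: bool = False         # covers a critical path


def risk_score(test: CaseRecord, changed_files: set[str]) -> float:
    """Combine change overlap, historical failure rate, and criticality."""
    failure_rate = test.failures / test.runs if test.runs else 0.0
    touches_change = 1.0 if test.covered_files & changed_files else 0.0
    # Illustrative weights only; not tuned on real data.
    return 0.5 * touches_change + 0.3 * failure_rate + 0.2 * float(test.critical)


def select_tests(tests: list[CaseRecord], changed_files: set[str],
                 budget_s: float) -> list[CaseRecord]:
    """Greedily pick the highest-scoring tests that fit in the time budget."""
    ranked = sorted(tests, key=lambda t: risk_score(t, changed_files), reverse=True)
    selected, used = [], 0.0
    for test in ranked:
        if used + test.duration_s <= budget_s:
            selected.append(test)
            used += test.duration_s
    return selected
```

Calling `select_tests(all_tests, {"auth.py"}, budget_s=1800)` would return the subset to run for a check-in that touched `auth.py`, capped at 30 minutes of total test time.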

Adaptive Testing Strategies

Learning from Results

AI tracks test outcomes:

- Which tests consistently pass: Less critical to run frequently

- Which tests occasionally fail: Run more frequently

- Which tests always fail in staging but pass in production: Investigate why (likely an environment-specific issue)

Over time, testing adapts to your application's actual risk profile.
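A minimal sketch of this kind of adaptation: tests with a long run of passes are scheduled less often, any recent failure brings a test back to every check-in, and a test that always fails in one environment while always passing in another is flagged for investigation. The function names, the doubling rule, and the thresholds are assumptions for illustration.

```python
def run_interval(history: list[bool], base_interval: int = 1,
                 max_interval: int = 8) -> int:
    """Return N, meaning 'run this test on every Nth check-in'.

    history holds recent outcomes, True for pass and False for fail.
    """
    if not history or not all(history):
        # No data yet, or at least one recent failure: run every time.
        return base_interval
    interval = base_interval
    # Double the interval for each block of 10 consecutive passes, up to a cap.
    for _ in range(len(history) // 10):
        interval = min(interval * 2, max_interval)
    return interval


def environment_specific(outcomes: dict[str, list[bool]]) -> bool:
    """True if the test always fails in one environment and always passes in another."""
    always_fail = any(bool(r) and not any(r) for r in outcomes.values())
    always_pass = any(bool(r) and all(r) for r in outcomes.values())
    return always_fail and always_pass


print(run_interval([True] * 30))
print(environment_specific({"staging": [False, False], "production": [True, True]}))
```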

Dynamic Coverage

Instead of fixed coverage targets ("We test 80% of code"), AI-driven coverage adapts to risk:

- Critical paths: Always fully tested

- New features: Heavily tested initially, less as they stabilize

- Legacy code: Tested enough to catch regressions, not heavily
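The policy above can be written down as a target that depends on the kind of code and how recently it changed. The numbers in this sketch are placeholders chosen for illustration, not recommendations, and `coverage_target` and `meets_target` are hypothetical helpers.

```python
def coverage_target(category: str, releases_since_change: int = 0) -> float:
    """Return the coverage fraction to aim for, given the code's category."""
    if category == "critical":
        return 1.0  # critical paths: always fully tested
    if category == "new":
        # Start high, then relax by 5 points per stable release, with a floor.
        return max(0.6, round(0.95 - 0.05 * releases_since_change, 2))
    if category == "legacy":
        return 0.5  # enough to catch regressions
    raise ValueError(f"unknown category: {category}")


def meets_target(measured: float, category: str,
                 releases_since_change: int = 0) -> bool:
    """Compare measured coverage against the adaptive target."""
    return measured >= coverage_target(category, releases_since_change)


for releases in (0, 3, 10):
    print(releases, coverage_target("new", releases))
```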

AI-Powered Bug Prediction

Anticipatory Testing

AI examines code changes and predicts: "This change to the payment processing code has high risk. It touches authentication and database queries, both high-risk areas." The system automatically runs comprehensive payment tests. This happens before code review.
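A heavily simplified version of this prediction scores a change by the areas it touches and picks a test suite accordingly. The path prefixes, weights, and threshold below are hypothetical. A trained model would replace the lookup table with learned risk estimates.

```python
# Hypothetical risk weights per code area.
HIGH_RISK_AREAS = {"payments/": 0.5, "auth/": 0.3, "db/": 0.2}


def change_risk(changed_paths: list[str]) -> tuple[float, list[str]]:
    """Return a risk score in [0, 1] and the high-risk areas the change touches."""
    score = 0.0
    touched = []
    for prefix, weight in HIGH_RISK_AREAS.items():
        if any(path.startswith(prefix) for path in changed_paths):
            touched.append(prefix)
            score += weight
    return min(score, 1.0), touched


def suite_for(changed_paths: list[str], threshold: float = 0.5) -> str:
    """Choose the comprehensive suite for risky changes, a smoke suite otherwise."""
    score, _ = change_risk(changed_paths)
    return "comprehensive" if score >= threshold else "smoke"


print(suite_for(["payments/charge.py", "auth/session.py"]))
print(suite_for(["docs/readme.md"]))
```

Because the score depends only on the changed paths, it can run as soon as a change is pushed, before any reviewer looks at it.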

Anomaly Detection

Your app normally has 3-5 bugs per release. This release has 40. AI detects the anomaly: "Something's wrong. Code changes are 10x riskier than normal. Recommend extended testing before release."
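A basic statistical version of this check compares the current count against the historical mean and standard deviation. The sketch below uses a z-score with an assumed threshold of 3. The sample history is made up to match the "3-5 bugs per release" example, and `is_anomalous` is a hypothetical helper.

```python
from statistics import mean, stdev


def z_score(history: list[int], current: int) -> float:
    """How many standard deviations the current count sits above the mean.

    Needs at least two historical values, because stdev is undefined otherwise.
    """
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Flat history: any deviation at all is treated as extreme.
        return 0.0 if current == mu else float("inf")
    return (current - mu) / sigma


def is_anomalous(history: list[int], current: int, threshold: float = 3.0) -> bool:
    """Flag releases whose bug count is far above the historical norm."""
    return z_score(history, current) > threshold


past_releases = [3, 4, 5, 4, 3, 5, 4]  # made-up bug counts per release
print(is_anomalous(past_releases, 40))
print(is_anomalous(past_releases, 5))
```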

Visual Regression with AI

Traditional Visual Testing

Screenshot the page, compare to baseline. If pixels differ, fail. The false positive rate is high, because differences in shadows, animations, and minor layout shifts fail the test even though they don't matter.

AI-Powered Visual Testing

AI understands visual semantics:

- "This button is now blue instead of green. Intentional change? Yes. Pass."

- "This text is now in a different font size. Intentional change? No. Fail."

- "This shadow is slightly different due to rendering. Not a real issue. Pass."

Far fewer false positives. Tests actually catch regressions.
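Semantic understanding needs a trained vision model, which is beyond a short example. The sketch below shows only the simplest step away from strict pixel equality: a tolerance-based comparison that ignores small rendering differences but still fails on large changes. Images are represented as grids of grayscale values, and the tolerances and function names are assumptions.

```python
Image = list[list[int]]  # rows of grayscale pixel values, 0-255


def changed_fraction(baseline: Image, candidate: Image,
                     pixel_tolerance: int = 10) -> float:
    """Fraction of pixels whose difference exceeds the per-pixel tolerance."""
    if len(baseline) != len(candidate) or any(
        len(a) != len(b) for a, b in zip(baseline, candidate)
    ):
        raise ValueError("images must have the same dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > pixel_tolerance
    )
    return changed / total


def visual_check(baseline: Image, candidate: Image,
                 pixel_tolerance: int = 10, max_changed: float = 0.01) -> str:
    """Pass when only a small share of pixels changed noticeably."""
    fraction = changed_fraction(baseline, candidate, pixel_tolerance)
    return "pass" if fraction <= max_changed else "fail"


baseline = [[100] * 10 for _ in range(10)]
slightly_different = [[103] * 10 for _ in range(10)]   # e.g. rendering noise
broken = [[255] * 10 for _ in range(2)] + [[100] * 10 for _ in range(8)]

print(visual_check(baseline, slightly_different))
print(visual_check(baseline, broken))
```

A tolerance like this cannot tell an intentional color change from an accidental one. That judgment is the part the AI-based approach described above is meant to supply.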

AI in Test Maintenance

The Problem

Tests become brittle. Application changes. Tests fail. Someone must update them. Time-consuming.

Before AI: App changes → Test breaks → Manual update → Hope it works now

With AI: App changes → Test breaks → AI analyzes the change → Automatically updates test → Verifies it passes

Self-Healing Tests

Tests learn to adapt to application changes automatically. XPath selector no longer works? AI finds the new selector. Button text changed? AI updates the assertion.

This can reduce test maintenance overhead by 40-60%.
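The core of self-healing is a fallback: when the primary locator stops matching, look for the element that best matches the other attributes recorded for it. The sketch below models the page as a list of attribute dictionaries instead of a real browser DOM. The `find_element` helper and the attribute names are hypothetical.

```python
from typing import Optional

Element = dict[str, str]


def find_element(dom: list[Element],
                 locator: Element) -> tuple[Optional[Element], bool]:
    """Return (element, healed). healed is True when the fallback was used."""
    # 1. Try the primary locator: an exact id match.
    if "id" in locator:
        for element in dom:
            if element.get("id") == locator["id"]:
                return element, False

    # 2. Heal: pick the element sharing the most non-id attributes.
    def overlap(element: Element) -> int:
        return sum(
            1 for key, value in locator.items()
            if key != "id" and element.get(key) == value
        )

    best = max(dom, key=overlap, default=None)
    if best is None or overlap(best) == 0:
        return None, False
    return best, True


# The button's id changed in a refactor, but its tag and text did not.
page = [
    {"id": "btn-login-v2", "tag": "button", "text": "Log in"},
    {"id": "nav-home", "tag": "a", "text": "Home"},
]
recorded = {"id": "btn-login", "tag": "button", "text": "Log in"}

element, healed = find_element(page, recorded)
print(element, healed)
```

When `healed` is true, a reasonable design is to log the substitution and update the stored locator only after a human or a passing test run confirms it, so a wrong guess does not silently hide a regression.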

Real-World Impact

Scenario 1: Mobile App Team. Traditional testing took 2 hours for the full suite. AI prioritization: 15 minutes for 95% coverage. Feedback loop 8x faster. Developers fix issues faster.

Scenario 2: Enterprise Application. New feature added. Developers worried about regression. Manual regression testing would take 3 days. AI-generated tests: done in 3 hours with better coverage.

Scenario 3: Visual Regression. Website redesign in progress. Manual visual regression testing: thousands of screenshots to verify by hand. AI visual testing: automatic verification with 10x fewer false positives.

Limitations and Considerations

AI Needs Training Data: AI-powered testing works best when there's existing test data and application history. New apps need some manual tests to start.

Domain-Specific Logic: AI can generate tests for typical scenarios but might miss your app's specific business logic. Pair AI with human expertise.

False Negatives: Intelligent prioritization means you skip some tests. Occasionally, a skipped test would have caught a bug. Risk is manageable but non-zero.

The Future of Testing

AI doesn't replace testers. It amplifies them. Testers define what matters ("Security is critical"), and AI handles the mechanical work (generating tests, maintaining them, prioritizing them). Testers focus on strategy and complex scenarios.

Getting Started with AI Testing

- Start with test generation for critical paths

- Try AI-powered prioritization on your existing test suite

- Measure impact: How much faster is feedback? How many bugs slip through?

- Gradually expand to visual regression and self-healing tests
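The two questions in the measurement step can be turned into numbers. This sketch computes feedback speedup and the share of bugs that escaped testing. The function names are hypothetical, and the first call reuses the 2 hours to 15 minutes figures from Scenario 1.

```python
def feedback_speedup(before_minutes: float, after_minutes: float) -> float:
    """How many times faster the feedback loop became."""
    if after_minutes <= 0:
        raise ValueError("after_minutes must be positive")
    return before_minutes / after_minutes


def escape_rate(found_in_testing: int, found_after_release: int) -> float:
    """Share of all known bugs that slipped past testing."""
    total = found_in_testing + found_after_release
    return found_after_release / total if total else 0.0


print(feedback_speedup(120, 15))  # Scenario 1: 2 hours down to 15 minutes
print(escape_rate(found_in_testing=45, found_after_release=5))  # made-up counts
```

Tracking both numbers together matters: a faster feedback loop is only a win if the escape rate does not rise along with it.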

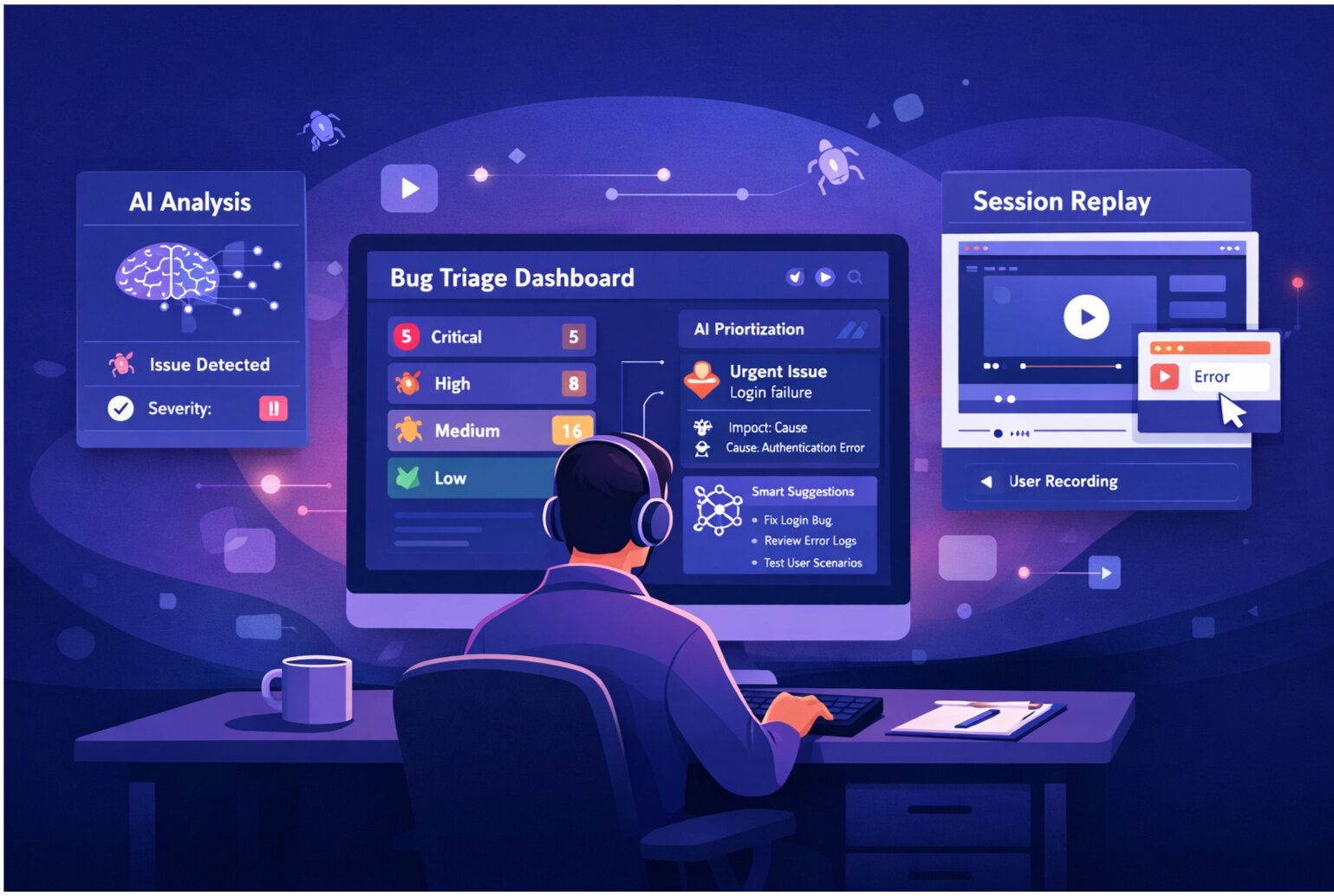

Combine AI triage with intelligent testing. Let AI handle bug prioritization and duplicate detection so QA focuses on finding bugs, not managing them.